Bio

I am a Research Scientist at Google AR Perception. Previously, I was a Lead Research Scientist in the Creative Vision group at Snap Research. I received my Ph.D. in 2017 from the Graphics & Parallel Systems Lab (GAPS) at Zhejiang University, supervised by Professor Kun Zhou.

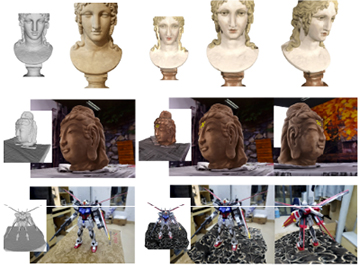

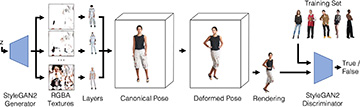

My research interests lie at the intersection of Computer Vision and Computer Graphics, especially human digitization, image manipulation, 3D acquisition, and physics-based animation.

I am looking for self-motivated interns in CV&CG. Drop me an email if you are interested.

Interests

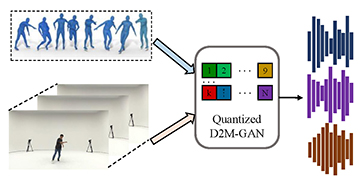

- Human Digitization

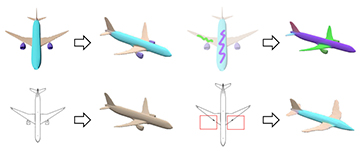

- Image Manipulation

- 3D Reconstruction

- Physics-Based Animation

Education

- PhD in Computer Science, 2017, Zhejiang University

- BSc in Computer Science, 2011, Zhejiang University